Written by: Mica Jorgenson

“How do you find the time for a DH project on top of your dissertation?” This is the question I get asked most frequently as a historian with a digital project. At this point in the discipline, DH does not replace traditional types of publication on your CV, so anything I do with DH is in addition to the usual publication expectations of my field.

Given the circumstances, it’s probably not surprising that I am on a constant hunt for time- and labour-saving techniques. As I’ve tried to become more efficient I’ve noticed that pure data management (before I even have anything to show) takes up a huge chunk of my time. In fact, according to the 2017 “Get Down with Your Data” DH@Guelph Workshop description, it’s about 80% of each project.

Since last year’s DH@Guelph Workshop Series, I’ve become much better at making maps. But data management often bogs me down. For example, while working on Tableau (the subject of my last post for the SCDS), I spent an embarrassingly long time trying to figure out how to get my data to re-arrange itself into a form that could be read by various mapping software. In flow mapping, ArcGIS is totally fine with four separate columns (origin lat, origin long, destination lat, destination long), but Tableau must have only two columns in which destination appears directly below origin for each mapped item. QGIS, the biggest fuss-pot of them all, needs a GEOM string in the last column of the table in which origin lat and long appear with destination lat and long separated by a comma. It is largely for this reason that QGIS has never appeared in my blog posts. I refuse to do that kind of work manually.
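
For anyone who would rather script that re-arranging than do it by hand, here is a minimal Python sketch of the three layouts described above. It is not what I used in the workshop. The column names, the `order` column, and the sample coordinates are made up for illustration, and the QGIS version assumes the GEOM string is well-known text (WKT), which puts longitude before latitude inside each pair.

```python
import csv
import io

# Hypothetical sample in the four-column layout (one row per flow).
WIDE_CSV = """id,origin_lat,origin_lon,dest_lat,dest_lon
1,43.26,-79.92,43.53,-80.23
2,49.28,-123.12,-31.95,115.86
"""


def read_flows(text):
    """Parse the four-column layout into a list of dicts."""
    return list(csv.DictReader(io.StringIO(text)))


def to_two_column_rows(flows):
    """Two coordinate columns, with each destination row directly below its origin row."""
    rows = []
    for f in flows:
        rows.append({"id": f["id"], "order": 1,
                     "lat": f["origin_lat"], "lon": f["origin_lon"]})
        rows.append({"id": f["id"], "order": 2,
                     "lat": f["dest_lat"], "lon": f["dest_lon"]})
    return rows


def to_wkt_rows(flows):
    """Keep the original columns and append a WKT linestring as the last column."""
    rows = []
    for f in flows:
        # WKT is "x y", so longitude comes first in each coordinate pair.
        wkt = "LINESTRING({} {}, {} {})".format(
            f["origin_lon"], f["origin_lat"], f["dest_lon"], f["dest_lat"])
        rows.append({**f, "geom": wkt})
    return rows
```

Calling `to_two_column_rows(read_flows(WIDE_CSV))` gives two rows per flow, and `to_wkt_rows(...)` gives one row per flow with the linestring in a final `geom` column. Either list can be written back out with `csv.DictWriter`.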

In “Get Down with your Data” Adam Doan, Carrie Breton, and Lucia Costanzo (instructors) promised to introduce me to a variety of data-wrangling techniques including HTML & CSS, webscraping, data management, Git/GitHub, APIs, OpenRefine, and Tableau. All of them, in my mind, are tools for cutting down on the total time spent monkeying with messy data.

I attended “Get Down with your Data” at DH@Guelph May 8-11.

Webscraping, OpenRefine, and Tableau had a kind of logical flow to them – they built on each other over the course of the week. The other tools, although maybe useful in the future, felt somewhat tangential to these core three.

Here’s how it went.

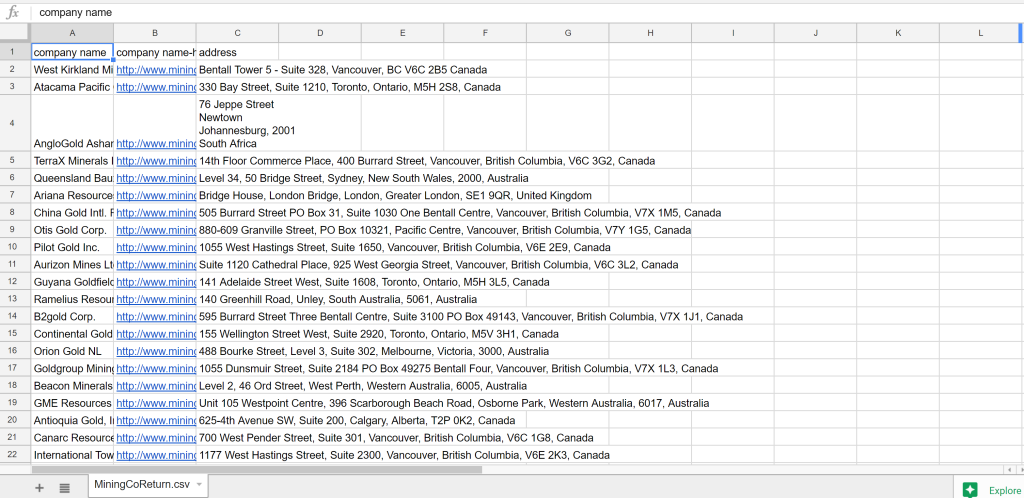

On Monday I learned to webscrape using a Chrome extension. We practiced on a Wikipedia page, but when it comes to mining (my area of interest) Wikipedia is sadly lacking. I guess mining industry types are not the same types who like writing Wikipedia articles. So after I grasped the basics, I found a website listing every modern, publicly traded mining company in the world with a link to their address. And scraped it. At the “polite” speed, the scraper took about 5 minutes to gather 211 rows of data. Here’s what it looks like.

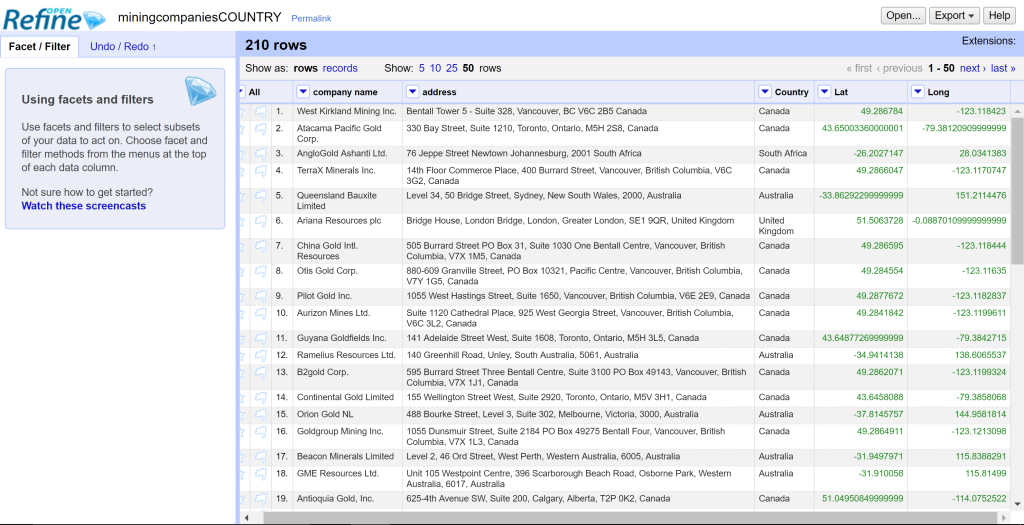

On Tuesday, between other projects, I used OpenRefine’s AMAZING geocoding process to get latitude and longitude from the addresses via Google Maps. Note that some of the addresses were messy – they had things like “level” or “suite” which Google could not find. Although I could have cleaned these with GREL, there were so few of them that I just did them by hand.
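
If there had been more of those messy addresses, a small script could have done the tidying. The sketch below is in Python rather than GREL, and it is only an illustration: the pattern assumes the unit always looks like “Level” or “Suite” followed by a number, and the example addresses are invented.

```python
import re

# Assumed pattern: "Level" or "Suite", a unit number (e.g. 3 or 200A),
# and an optional trailing comma.
UNIT_PATTERN = re.compile(r"\b(?:level|suite)\s+\d+\w*\s*,?\s*", re.IGNORECASE)


def strip_unit(address):
    """Remove a 'Level N' or 'Suite N' fragment so a geocoder sees only the street address."""
    cleaned = UNIT_PATTERN.sub("", address)
    # Collapse any doubled spaces and trim stray commas left at the ends.
    return re.sub(r"\s{2,}", " ", cleaned).strip(" ,")


# Invented addresses, for illustration only.
examples = [
    "Suite 200, 123 Example Street, Toronto",
    "45 Sample Road, Level 3, Perth",
    "12 Level Street, Sudbury",
]
cleaned_examples = [strip_unit(a) for a in examples]
```

Requiring a number after the keyword is a deliberate choice: it leaves a street that happens to be called “Level Street” alone.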

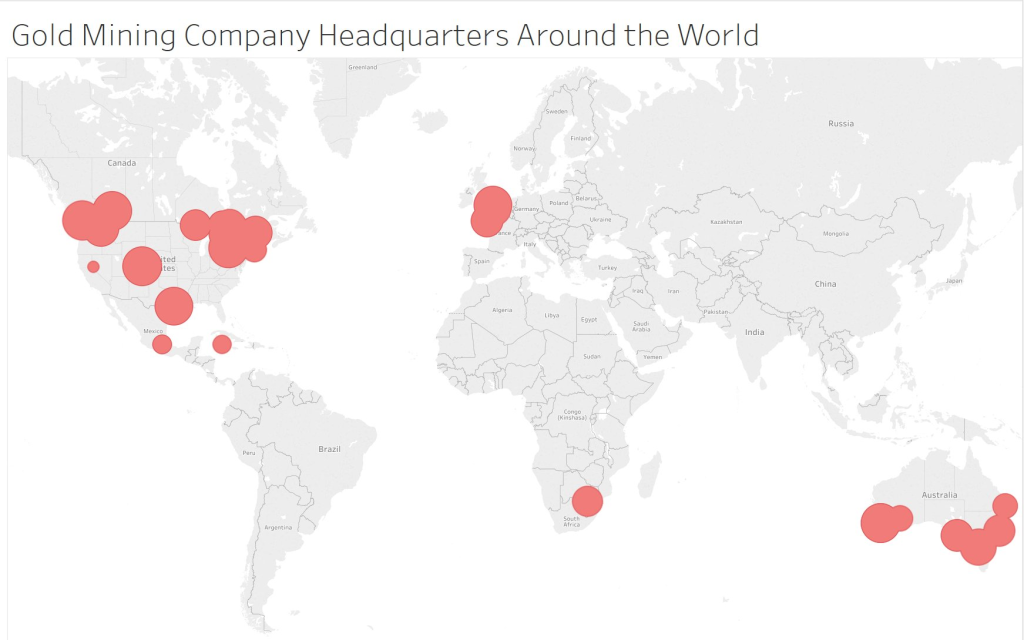

On Wednesday, getting ahead of the class a bit, I dropped the results into Tableau. Tada! Mapped, the data show that most HQs are located in Canada, the US, and Australia.

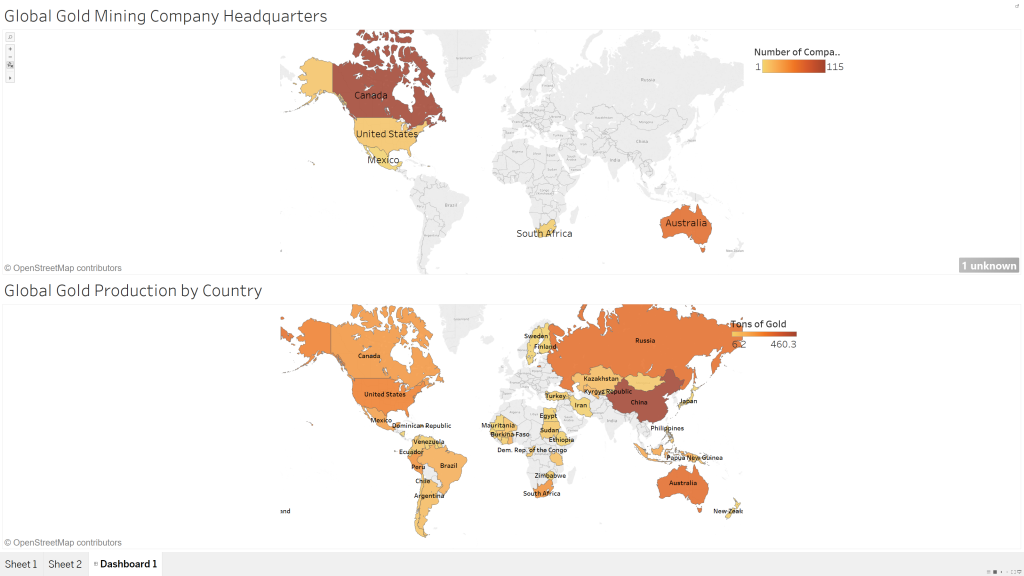

On Thursday, I decided it would be interesting to compare the sites of mining headquarters with the places gold is actually produced. I found data, scraped and cleaned it, and mapped that too. While I think that there are probably flaws (Tableau for some reason did not recognize “United Kingdom” or “Cayman Islands” as countries, so they’re not mapped), it does show some interesting trends. For example, in Canada we have a disproportionate number of company headquarters in comparison to the amount of gold we produce. Canada is well-known to be friendly to mining companies, and there are some specific financial and legislative advantages to headquartering here.
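
One way to put a number on “disproportionate” is to compare each country’s share of headquarters with its share of production. This is a rough sketch of that idea, not the calculation behind my maps. The function names are my own, and the inputs are assumed to be a list of HQ countries (one entry per company) and a dictionary of production figures by country.

```python
from collections import Counter


def shares(counts):
    """Convert raw counts or amounts into fractions of the total."""
    total = sum(counts.values())
    return {key: value / total for key, value in counts.items()}


def hq_to_production_ratio(hq_countries, production):
    """Ratio of HQ share to production share for each country.

    A ratio above 1 means a country hosts more headquarters than its
    share of production would suggest. Countries with no (or zero)
    production figure are skipped rather than guessed at.
    """
    hq_share = shares(Counter(hq_countries))
    prod_share = shares(production)
    return {
        country: hq_share[country] / prod_share[country]
        for country in hq_share
        if prod_share.get(country)
    }
```

With placeholder inputs such as `hq_to_production_ratio(["A", "A", "A", "B"], {"A": 10.0, "B": 30.0})`, country “A” has three quarters of the headquarters but one quarter of the production, so its ratio is well above 1.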

These screencaps are purely experimental fluff – data I gathered and manipulated simply as part of the learning process. But now that DH@Guelph is over, I am using OpenRefine and Tableau all the time for my regular research. Recently I’ve been doing a lot of work with Ontario Climate Data using my new skills – the subject of my next blog post, so stay tuned! More generally speaking, stuff that took me hours to figure out in Excel tutorials takes a matter of minutes in OpenRefine. I still don’t really understand GREL, but luckily the documentation is massive and very helpful. Tableau is a much simpler alternative to ArcGIS and even ArcOnline. With a combination of OpenRefine and Tableau it’s a matter of minutes to pump out content for social media or blogs or just to get a handle on the data for myself. And the best part is that it’s totally free for students!

And so, with another year of DH@Guelph under my belt, I’ve added some powerful new tools to my existing kit. Some things I won’t use again (we covered APIs too quickly for them to stick), but much of it I will. Although I still don’t have an answer for the question “how do you find the time?” I’ve become a lot more skilled at data wrangling: silly tasks like re-arranging spreadsheets consume less time and energy. As a result I am more effective in my engagement with my data and more productive as a DH scholar.

And, for the record, I was able to create a GEOM linestring for QGIS (finally) using OpenRefine. Unfortunately QGIS still didn’t like it. Just for laughs, here’s what it spit out.

I guess there are still things to learn!

Special thanks to the McMaster Department of History, the Wilson Institute, and the Sherman Centre for Digital Scholarship for funding graduate student registrations for DH@Guelph 2017. Also thanks to the instructors and organisers at DH@Guelph for being so patient and for making it all possible!
