Written by Katherine Eaton

Why is it so hard to get data, when data is everywhere?

We live in the Golden Age of the Internet, with millions of web pages instantly accessible to provide a steady stream of services and entertainment. Not only has this radically transformed the academic publishing process, but it has also given birth to entirely new fields of study. Oceans of digital data on human behaviour lie in plain sight on the free web for those willing to adventure into the unknown. And yet…

Why is it so hard to get data?

This is a question I ask myself constantly, and so it formed the inspiration for this blog post. My research relies heavily on data that is stored on the internet. One site I work with frequently is the National Center for Biotechnology Information (NCBI), an expansive repository for biological data. The web browser interface is particularly good for homing in on interesting records and zooming in on the particular characteristics of an individual sample.

Except, like many internet researchers, I don’t tend to work on the scale of a single sample or data point. My work involves analyzing disease outbreak records, often on a global scale, which can mean processing thousands of records. And manually clicking through 1500 web pages is not what I consider a feasible solution.

This is about the time that I discovered the importance of learning to use an API (Application Programming Interface). Large institutional sites will often provide a way for developers or researchers to get more direct access to the underlying data, bypassing the site’s graphical interface which, while beautiful, is an obstacle in the path to acquiring stripped-down and simplified datasets.

But the stripped-down data is also hard to work with. The format can be very ‘ugly’ for human eyes (such as XML) when we might instead want something more visually digestible (such as a table). This type of data transformation is certainly not straightforward and can require fairly advanced programming skills. And so I also found myself asking…

Why is it so hard to organize data?

I’ve realized that “organized” is a very subjective term. How I would prefer to store information is not necessarily how my collaborators would, let alone the people who designed the site and created the API. But there were at least a few basic elements that I knew I could improve upon.

And so we arrive at my summarized problem: How do I efficiently pull information from a target website, transform it into a new format, and store it in a way that lets me easily update it and put it to work effectively? Eventually, this issue annoyed me enough that I wanted to tackle this data access problem just as much as I wanted to tackle real-world problems with the data I acquired.

And thus a digital project was born.

Through the graduate residency program at the Sherman Centre, I am developing software that implements a solution to this problem of data accessibility and representation. Over the past few years, I have been working on a software application that can connect to an online repository, retrieve comprehensive information based on a user’s specific needs, and reformat this data for research use.
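To make the "reformat" step a little more concrete, here is a minimal Python sketch of turning XML records into a table. It is not the software described above, and the XML layout, the tag names (`RecordSet`, `Record`, `Organism`, `Country`, `Year`), and the placeholder values are all invented for illustration; a real repository's output would have its own, usually much messier, structure.

```python
import csv
import io
import xml.etree.ElementTree as ET

# Hypothetical XML invented for this example; NOT a real repository response.
SAMPLE_XML = """
<RecordSet>
  <Record id="S1">
    <Organism>Example organism</Organism>
    <Country>Country A</Country>
    <Year>1999</Year>
  </Record>
  <Record id="S2">
    <Organism>Example organism</Organism>
    <Country>Country B</Country>
  </Record>
</RecordSet>
"""


def records_to_rows(xml_text, fields):
    """Flatten each <Record> element into a dict, one key per requested field."""
    root = ET.fromstring(xml_text.strip())
    rows = []
    for record in root.iter("Record"):
        row = {"id": record.get("id", "")}
        for field in fields:
            # Missing fields become empty strings so every row has the same columns.
            row[field] = record.findtext(field, default="")
        rows.append(row)
    return rows


def rows_to_csv(rows, fields):
    """Render the flattened rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["id", *fields], lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


FIELDS = ["Organism", "Country", "Year"]
ROWS = records_to_rows(SAMPLE_XML, FIELDS)
print(rows_to_csv(ROWS, FIELDS))
```

Even in this toy case, the second record has no year, so the code has to decide what a "missing" value looks like in the table. Decisions like that are where much of the subjectivity in "organized" data comes from.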

Currently, I am focused on how to disseminate and adequately support this software tool, which led me to my first problem in this graduate residency: How do you publish academic software? What new skills do I need to acquire to do so, and how does this differ from ‘traditional’ paper publishing?

To start with, I am spending the first half of this residency realizing the differences between being a Programmer and a Software Developer. Once my code leaves the safe environment of my own computer and ventures out into other people’s machines, truly unexpected things happen. I am working hard to learn the new skill sets required, including figuring out how to run tests across different operating systems (without physically owning these machines) and tackling an unexpected amount of web development/website creation hurdles.

Following publication, my second goal for this residency is to put this tool to work. In my dissertation research through the Department of Anthropology, I analyze biological data stemming from disease outbreaks, both historical and modern. In the second half of this residency program, I’ll be harnessing the power of my software to locate and acquire publicly available datasets that have never been synthesized in the literature before.

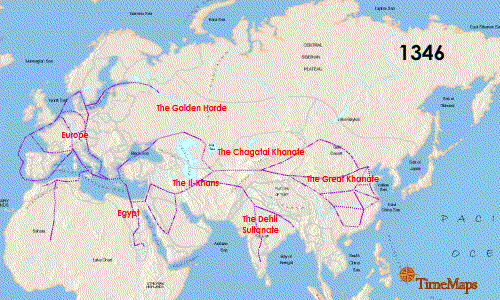

One application I’m looking forward to is reconstructing the timing and spread of a disease (the Plague!) throughout some of the most infamous outbreaks in history, like the Black Death. Specifically, I’m looking to put together a digital exhibit which can combine interactive visuals with narrative text. I’m drawing inspiration partially from digital storytelling applications, like StoryMap and Nextstrain Narratives.

Nextstrain Narratives: 20 Years of West Nile Virus in the Americas

I’m hopeful that using digital methods like these to organize and present information will also open up new collaborative opportunities to weave together pieces of evidence from diverse fields like history, archaeology, genetics, and ecology.

As I learn many new tools for acquiring and manipulating information, I still occasionally find myself asking: Why is it so hard to get data? But now I also ask: Why did I expect it to be easy?

The internet can often present an illusion of accessibility (just a click away!), instant access (responsive apps), and standardization (I’ve seen this web template before…). Over time, I’ve let myself be lulled into the false expectation that internet research should be quick and simple, just like our user experience is. It’s easy to forget that the internet is not some vast machine, but rather the product of individuals creating content tailored to their own interests and needs. It will always be hard to get data, but hopefully through the efforts of many small projects like my own, it won’t feel quite so impossible.
