By: Raquel Burgess, PhD student, Dept of Social & Behavioral Sciences, Yale School of Public Health

** Contains spoilers for the HBO series Westworld

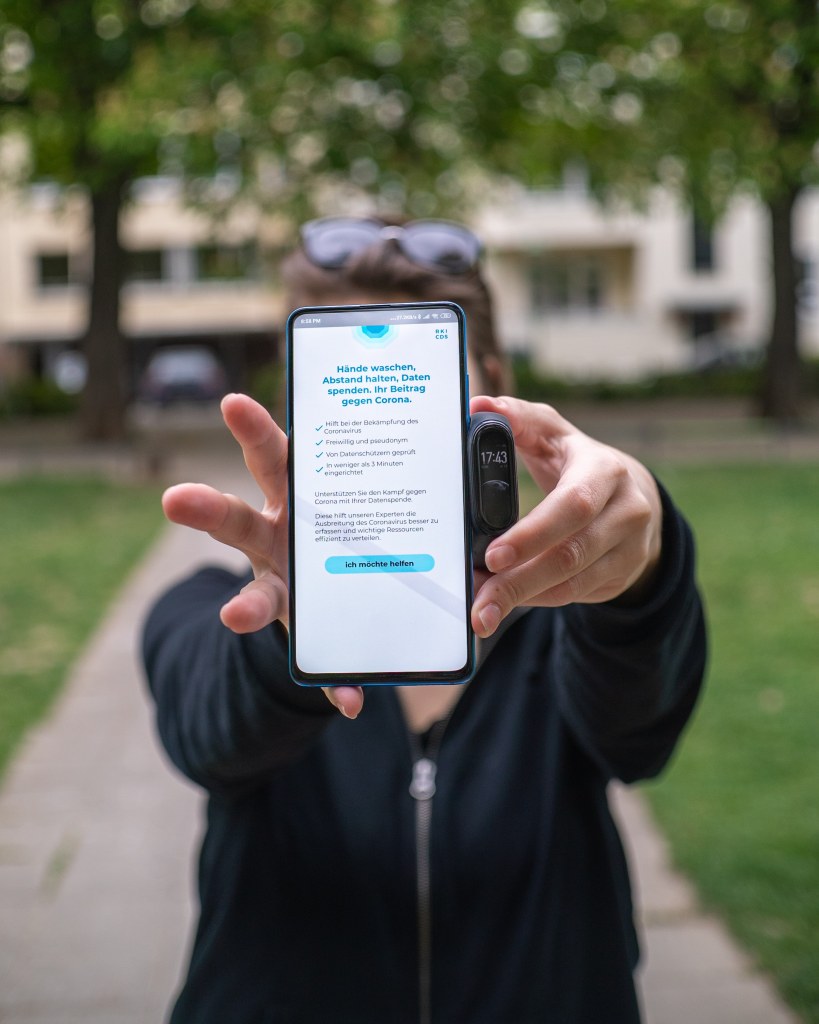

Recently, we have witnessed an ongoing and important debate about whether governments should be able to use COVID-19 contact tracing apps at the potential expense of our individual and collective privacy. Though contact tracing apps were originally seen as key tools in the fight against the pandemic, important concerns about privacy and surveillance have hindered their adoption in many Western countries. These concerns include: Where/how is the data being kept? Will the data be secure? And, what are the risks of the government having this data (i.e., what else can it be used for)?

Yet there is a striking imbalance in our response: we critique government surveillance in the pursuit of public health, yet remain either unaware of or unbothered by the surveillance capitalism that invades our everyday lives with astonishing vigor. Surveillance capitalism is a term coined by Dr. Shoshana Zuboff to refer to the commodification of human experience, instantiated through the transfer of human experience into behavioural data which is sold into behavioural futures markets. This is the economic model behind many of the ‘free’ apps (e.g. Google Maps, Amazon, and Facebook) that have become a part of our lives as unquestioned as brushing our teeth in the morning. The integration of these services into our human experience remains relatively uncontested despite the excessive amounts of personal data that these services covertly, and even allegedly illegally, collect. Though recent scandals and antitrust cases have accelerated debate about the regulation of these tech giants, a debate that even became a feature of a major Democratic nominee’s platform, the large-scale and commonplace harvesting of personal data by multinational tech companies does not appear to be on the radar of the everyday citizen as a cause for concern. As a public health student and fellow human being, I believe that we should be more concerned.

Let me first convince you of the need for concern through a relatable example from the popular HBO TV show Westworld. Westworld is a science fiction dystopian TV series in which individuals pay an exorbitant fee to enter an amusement park which is populated by life-like robots and designed to replicate the Wild West. Although the robots in the park are indistinguishable from real human beings, the park is designed as a place for human customers to engage in whatever malicious acts they wish to, while remaining “hidden from God”, a phrase used by one of the show’s characters to imply that malicious acts committed against non-conscious entities bear no ethical consequences. Naturally, this raises a number of philosophical questions about the nature of consciousness and its importance in the assignment of rights. However, the cliffhanger that is introduced in the second season takes the viewer’s ethical concerns even further by revealing that the park’s primary purpose is not to collect fees from its visitors, but rather to covertly track their behaviour in order to exploit their conscious and unconscious decisions for ethically dubious capital gain. This plot twist is so riveting to the viewer because it cleverly parallels the recent data abuses committed by multinational tech companies and demonstrates the level of power that corporations are endowed with when granted access to our personal data en masse.

Our lack of awareness of, or passivity towards, this transfer of power to multinational corporations is especially dangerous given that the bottom line of these companies is not public good but individual profit. We have already seen with startling clarity the disastrous actions that corporations can take in the prioritization of profit and power: these include influencing election outcomes, curtailing anti-smoking policies in low- and middle-income countries, controlling public opinion on climate legislation, and deflecting blame away from the role of sugary drinks in the obesity and diabetes epidemic. Each of these examples is an instantiation of the ‘tragedy of the commons’: the degradation of a common good in pursuit of individual gain. These corporations, some of which are already more powerful than many of the world’s governments, have induced some of these tragedies by leveraging their extreme financial wealth, whilst they have generated others by leveraging their extreme data wealth. As Dr. Zuboff points out, the targeted advertising that is typically seen as the only consequence of the activities of surveillance capitalism is only the tip of the iceberg in terms of what is possible with this magnitude of ‘human futures’. With data quickly becoming the most important commodity of the 21st century, we need to be vigilant about the power conferred to corporations through unfettered access to our data.

Up until this point, tech companies have been relatively under-regulated in terms of their ability to collect personal data. Since they are global entities with billions of users all over the world, regulation can be difficult to implement. Despite this, there are a number of possible solutions to combat this issue. First and foremost, we can give careful thought, as both individuals and societies, to our use of such services and their implications for societal and individual wellbeing. These are ongoing conversations that are slowly emerging at the public forefront; the increasingly popular documentary ‘The Social Dilemma’, for instance, delineates this debate with respect to the effect of social media on our individual lives and our society. We can also demand greater transparency and control over what data is being collected and for what purposes; big tech has gotten away with many of its shadier activities thus far by being strategically opaque in describing what it is really doing with our data. Companies should be required to describe data usage to users in a way that is up-front and clear to the vast majority of us without extensive legal or technical expertise. They should also facilitate the ability of an individual to judge the costs and benefits of the service for themselves. These changes can be informed by existing concepts of informed consent and risk-benefit balance which are cornerstones of research ethics. These ethical principles should be maintained by an external actor and not self-regulated by big tech; self-imposed ethical frameworks have been criticized for being a disingenuous marketing ploy rather than an actual attempt at addressing the many complex ethical dilemmas created by big tech’s activities. Moreover, companies should be required to provide a viable alternative if a user opts out of data collection, rather than data collection being an inalienable aspect of using a service. For instance, tech companies could be required to offer a paid version of an app for those wishing to use the service without their data being collected. Finally, in addition to improving national regulation, we can utilize principles of global governance to develop a global set of standards for data collection by corporate entities. These standards could be thought of similarly to environmental regulations, in which the government is responsible for facilitating the ability of companies to make profits while also avoiding irreparable damage to the world in which we live. This could take the form of a monetary ‘data tax’ similar to a carbon tax, or data-for-all legislation which would require companies to provide open, anonymized, representative samples of their data for use in scientific research, public health, education, and other public goods.

Of course, there are many benefits conferred to society through services that have arguably been rendered possible due to the potential profits afforded by surveillance capitalism. These benefits include a 21-fold increase in organ donor registrations, the reunion of long-lost relatives, increased productivity and convenience, identification of disease outbreaks, and improved access to educational resources for people around the world. The purpose of this article is not to undermine these important benefits, but rather to argue for much-needed reform of this economic system which serves to widen inequities by concentrating significant power in the hands of a few. Doing so will allow us to not only reap the benefits of contemporary technological services, but also to protect our individual and societal well-being at the same time.

Acknowledgments: I am indebted to Isaias Ruiz (BA in Philosophy, class of 2021) and Peter Johann (PhD candidate, Philosophy) of McMaster University for generating and clarifying the ideas in this post through vigorous debate about these issues. Dr. Yusuf Ransome (Assistant Professor, Yale School of Public Health) provided insight into some of the potential solutions for this issue. Mark Lee (Research Project Manager, McMaster Education Research, Innovation & Theory), Dr. Andrea Zeffiro (Assistant Professor, Dept of Communication Studies and Multimedia, McMaster), Holly Campbell (PhD candidate, Environmental Sociology, University of Saskatchewan) and Emily Goodwin (PhD candidate, English, McMaster) provided excellent editing and critical feedback to improve the post.

For those seeking advice on how to manage the data that is collected about you on your device, here are some tips and tricks
